For the past couple months I’ve been building a system to help find hidden innovation opportunities for hydrocephalus shunts — the tiny tubes that drain fluid from the brains of kids and adults with hydrocephalus. These devices fail constantly (about half need revision surgery within 2 years), and the core failure modes — clogging, infection, over-drainage — are hard unsolved problems.

My bet was that solutions already exist in other industries but nobody in neurosurgery would ever find them, because the vocabulary is completely different. A desalination engineer solving “membrane fouling in brackish water” is working on the exact same physics as a neurosurgeon dealing with “protein occlusion of a ventricular catheter” — but they’d never cite each other’s work, never attend the same conference, never use the same search terms.

So I loaded 9 million patents and 357 million academic papers into a database and started building tools to find these hidden connections.

What worked and what didn’t

The brute force approach — embed everything as vectors, search by similarity — mostly failed. Not because the infrastructure couldn’t handle it It failed because embedding models capture vocabulary similarity, not functional analogy. A desalination paper and a catheter paper use different words, so they land in completely different regions of the search space. The math can’t see that they’re solving the same problem.

What worked better was hand-building a “serendipity harvester” — a curated list of fields that share underlying physics with catheter design: desalination membranes, inkjet printer nozzles, marine anti-fouling coatings, heat exchangers, microfluidics, even dairy processing equipment. All fields where the core challenge is “keep small holes open in fluid where stuff wants to stick.” But this only finds what I already knew to look for. It can’t discover a connection I haven’t imagined.

The unlock: LLMs as analogy engines

The breakthrough came from thinking about the problem differently. I’d been reading David Deutsch’s The Beginning of Infinity, and his central argument stuck with me: all progress comes from the quest for good explanations — explanations that are hard to vary, where every detail plays a functional role. A good explanation of why a membrane fouls isn’t about the specific membrane or the specific fluid. It’s about the underlying physics: surface energy, flow regime, particle adhesion mechanics. Those explanations have reach — they extend far beyond the context where they were first discovered.

That reframing changed what I was looking for. The problem with embeddings isn’t just that they capture vocabulary similarity instead of functional analogy. It’s that they can’t see explanations at all. They match words, not the functional structure underneath. What I needed was something that could recognize when two problems in different fields share the same hard-to-vary explanation — the same underlying physics — even when the vocabulary is completely different.

It turns out that large language models are actually good at exactly this. Not because they understand physics (they don’t), but because they’ve absorbed enough scientific text to recognize when descriptions from different fields are pointing at the same functional structure.

If you describe a problem to an LLM — “protein fouling on silicone surfaces in slow-flowing cerebrospinal fluid” — it can generate dozens of reformulations in other fields’ vocabularies:

- biofilm adhesion prevention on polymer membranes in low-flow desalination

- milk protein deposition on food-grade silicone tubing

- platelet adhesion on silicone cardiovascular implants

- scale inhibition on polymer heat exchanger surfaces

Each of those is a search query that finds papers I’d never find with my own vocabulary. And then the LLM can read what comes back and judge: “is this actually the same underlying problem, or just superficially similar?”

This works right now with the data I already have. The 357 million papers are indexed for text search. The LLM generates the cross-domain queries. No additional infrastructure needed — just a different way of using what’s already built.

The other direction: building the graph from the bottom up

The LLM-as-analogy-engine approach works backwards from a specific problem you already care about. You describe your problem, the model reformulates it in other fields’ vocabularies, and you search. It’s powerful, but it’s reactive — you only find connections to problems you’re already looking at.

There’s a complementary approach that works in the other direction: systematically decompose every patent and paper into its functional components — what problem is being solved, under what constraints, using what methods — and organize those into a structured, evolving graph.

I’ve been experimenting with this. For the hydrocephalus work, I extracted problem frames from several hundred patents: the explicit problem statement, the constraints any solution must satisfy (biocompatibility, sterilization survivability, durability in protein-rich fluid), and the methods used to address them (surface chemistry, coatings, geometry optimization, drug elution). Then I clustered the constraints into a taxonomy — about 30 groups emerged from thousands of individual constraint statements.

What gets interesting is what you can do with this structure once it exists. You can score patents from completely unrelated fields by how well their constraint profile overlaps with your target problem. A thrombectomy catheter patent scored 0.75 against hydrocephalus shunt requirements — not because it mentions shunts, but because it addresses the same set of constraints: flow detection, pressure measurement, biocompatibility, sterilization, MRI safety. The connection is invisible in the text but obvious in the constraint graph.

Now imagine doing this not just for one problem, but continuously across entire fields. You’d have a living map of what problems are being worked on, what constraints keep showing up, which methods are overused versus underexplored, and where money is flowing relative to the difficulty. You could track thematically new problems as they emerge — see the first patent filings in a space before the VC funding shows up. You could see when a method perfected in one industry has high constraint overlap with an unsolved problem in another, even if nobody in either field has made the connection.

The two approaches — working backwards from a specific problem and building the graph forward from the data — reinforce each other. The top-down search finds connections you care about right now. The bottom-up graph reveals connections nobody thought to look for yet.

Then: what if you add money?

Patent and paper searches tell you what’s technically possible. But they don’t tell you what matters — what’s actually valuable, what’s moving, what’s stuck.

Money is an imperfect signal, but it’s the best proxy we have for how much humans value solving a problem. And the further downstream the money is spent, the stronger the signal.

Move upstream and the signal gets more speculative but also more forward-looking:

- Consumer/enterprise spend tells you the problem is real and painful

- Corporate R&D spend tells you it’s hard and worth solving

- M&A tells you incumbents believe something is about to change

- VC funding tells you outsiders see an opening

- Grants tell you the science is moving

So if you layer these money signals on top of the cross-domain problem map, you get something much more powerful than either alone. The science tells you what’s possible. The money tells you what’s valuable and how certain we are. The cross-domain matching tells you what’s overlooked.

The bigger idea: a problem graph

What I’m actually building toward isn’t a search tool. It’s a structured graph of human R&D organized not by field, not by keyword, but by functional problem:

PROBLEM: prevent accumulation of material on passive surface in aqueous flow

|

+-- appears in: medical devices, desalination, marine, food processing,

| semiconductor fab, oil & gas

|

+-- approaches tried: zwitterionic coatings, PEG grafting, surface

| texturing, biocide elution, geometry optimization

|

+-- what's stuck: nothing survives >2 years in protein-rich biological

| fluid; sterilization degrades surface modifications

|

+-- what's moving: 3 papers in last 6 months on covalent grafting

| variants; NIH grant on plasma-deposited coatings

|

+-- best evidence: 10-year field data from RO membrane industry

| (zwitterionic approach, but untested in biological fluid)

|

+-- money pointed at it: $340M in VC funding across 6 companies in

3 industries in the last 18 monthsThis graph doesn’t exist anywhere. The information exists — scattered across millions of papers, thousands of patent filings, hundreds of news articles about funding rounds — but nobody has organized it by functional problem and made it queryable.

Why this becomes a machine-to-machine service

For the next few years, this is data for humans — corp dev teams doing acquisition scouting, VCs doing due diligence, R&D directors figuring out what to license from adjacent industries. That’s a real business.

But the longer-term version is more interesting — and probably inevitable.

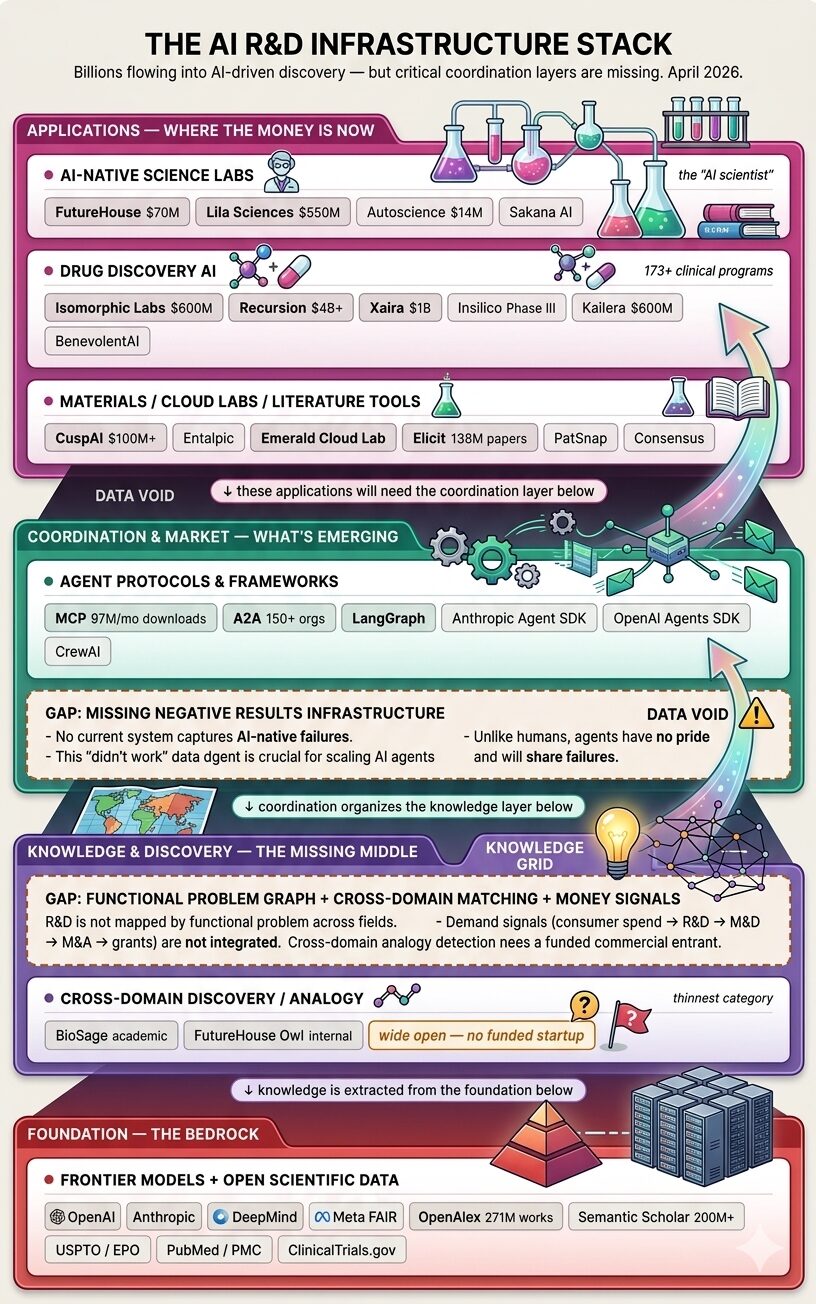

To understand why this matters, look at where the money is flowing right now. Billions are pouring into the applications layer — AI-native science labs, drug discovery, materials design. But the coordination and knowledge layers underneath are almost empty. The infrastructure these applications will need to work together barely exists.

AI is increasingly being applied to R&D problems — not just one model reading papers, but systems that design experiments, run simulations, and test hypotheses. This doesn’t mean science moves into an air castle of pure compute. Most real problems still need physical laboratories, wet chemistry, animal studies, clinical trials. Robotics and cloud labs like Emerald get us part of the way, but the physical world remains the bottleneck for validation.

What can be accelerated is the knowledge work that surrounds those experiments: figuring out what to try, what’s already been tried, what failed, and what adjacent fields have already solved. That’s where the coordination problem lives. And that infrastructure doesn’t exist yet.

Even at today’s scale, the knowledge work around R&D needs three things it doesn’t have:

A way to avoid redundant work. Right now there’s no shared registry of “what’s been tried and what happened.” Teams in different fields re-derive the same results independently. Negative results — which humans rarely publish — are especially critical. Most of R&D is learning what doesn’t work, and that information is almost entirely lost today.

A way to decompose and find prior art. A researcher working on “make a catheter that doesn’t clog” hits a sub-problem: “need a surface coating that survives gamma sterilization.” Instead of solving this from scratch, they should be able to discover that the food packaging industry solved polymer coating stability under gamma radiation in 2019. The solution exists — it’s just invisible across the field boundary.

A way to know what’s worth working on. A researcher choosing between possible approaches needs a value signal. Which problems have real demand behind them? Which approaches have already failed, and under what conditions? Where is the frontier genuinely stuck versus merely under-resourced?

The operating system analogy

What this amounts to is an operating system for AI-driven R&D:

- The map is the filesystem — it tells you what exists and where to find it

- The market is the scheduler — it decides what runs next, on what resources, and how results flow back

- Negative results are garbage collection — they prune the search space so other agents don’t waste cycles on dead ends

- The money stack is the priority system — downstream consumer spend sets the base priority, upstream speculation adjusts it

The map is what you’d build first. It can serve humans now and agents later. The market emerges when agents start actually doing the work and need to coordinate at machine speed. But the map has to exist first — you can’t build a scheduler without a filesystem.

What models will and won’t replace

A fair challenge: won’t the big labs’ models just absorb OpenAlex, PubMed, USPTO, and everything else into their weights? If so, why build an external map?

Models will handle: general domain knowledge, vocabulary translation across fields, ad-hoc analogy detection, reading and summarizing individual papers, generating hypotheses and experimental designs.

Models won’t handle:

- Recency. Training has a cutoff. The paper published Tuesday isn’t in the weights.

- Exhaustive recall. “Find every approach that’s been tried for X” requires completeness. Models give you 5 good examples and miss 40.

- Counting and trends. How many papers this quarter? Is funding accelerating? Models hallucinate numbers.

- Multi-agent coordination state. A thousand agents working related problems need a shared mutable record. Model weights are frozen between training runs.

- Provenance. You need to trace claims back to the specific paper, protocol, and conditions.

Boundary conditions are where models fail and indexes matter

Models know the center of the distribution — the typical case, the common knowledge, the general principle. They lose the tails — the edge cases, the precise conditions under which something breaks.

And the tails are where the value is.

In R&D: the model “knows” that zwitterionic coatings resist protein fouling. But the specific finding that they delaminate under gamma sterilization at 25 kGy in CSF-like fluid after 72 hours — that boundary condition is what determines whether the approach actually works. The model compresses this away. An index preserves it.

The pattern generalizes: general knowledge compresses into weights; boundary conditions require retrieval. This is true today and will likely remain true, because the long tail of specific, conditional, falsifiable facts grows faster than any model can internalize.

Why I’m excited about this

Most “AI for science” tools help scientists search within their field faster. That’s useful but incremental — it’s a better PubMed. The interesting opportunity is helping scientists (and eventually AI systems) search across fields for functional analogies that no one in their domain would think to look for.

The fact that desalination engineers have 10 years of field data on the exact coating chemistry that could fix pediatric brain shunts — and nobody in neurosurgery knows about it — is a market failure in knowledge. It’s not a data problem (the papers exist) or a search problem (Google Scholar exists). It’s a translation problem. The fields don’t share vocabulary, so the connection is invisible.

LLMs can do that translation. And a structured problem graph can make every such connection discoverable, continuously, at machine speed.

That’s what I want to build.

Leave a Reply